Fun with the story-compiler, the scripting interface, and fonts this week. Also discovered a biggish screw-up.

The story-compiler was fairly straightforward. Scene metadata headers had a fair bit of wasted space in them, and that could be compacted down during the compilation phase.

What the story-compiler produces isn’t quite bytecode as most people understand it, but it is much closer to that idea than it is to human-readable data.

In any case, crunching a lot of tokens down into single-character codes saves a whole bunch of space, both on disk and in memory.
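To give a rough idea of what that kind of crunching looks like, here is a minimal C++ sketch. It is not the actual story-compiler: the token names, the codes, and the `compactTokens` helper are all invented for illustration.

```cpp
#include <sstream>
#include <string>
#include <unordered_map>

// Hypothetical token table: the real compiler's tokens and codes are not
// given in this post, so these entries are invented for illustration.
inline const std::unordered_map<std::string, char>& tokenCodes() {
    static const std::unordered_map<std::string, char> codes = {
        {"scene", 'S'}, {"title", 'T'}, {"location", 'L'}, {"music", 'M'},
    };
    return codes;
}

// Replace each whitespace-separated word that is a known token with its
// single-character code; pass every other word through unchanged.
// Words are re-joined with single spaces, so runs of whitespace collapse.
inline std::string compactTokens(const std::string& line) {
    std::istringstream in(line);
    std::string word, out;
    while (in >> word) {
        if (!out.empty()) out += ' ';
        auto it = tokenCodes().find(word);
        if (it != tokenCodes().end()) out += it->second;
        else out += word;
    }
    return out;
}
```

A real format would also need some way to tell a code apart from a literal one-letter word, which this sketch ignores.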

And speaking of in-memory space, I discovered a major error.

Two weeks ago, you may recall, I switched internal memory-management models so that scene performances were dynamically loaded and unloaded. It seemed to work well, so I thought little more about it.

Then, on a whim, having finished a bit of other tinkering, I suddenly had this inexplicable urge to set a breakpoint where the performances get unloaded at the end of a scene, and run the game in the debugger.

Urges like this are often a sign that something is nagging at me.

Well, I did those things and discovered that I wasn’t actually releasing the memory used by the performance. Yes, it was a memory leak. I was throwing away references to those objects, but not the objects themselves.

Oops.

It was trivial to fix, and it certainly reduced memory-usage, but I felt quite daft about it. There aren’t very many places in the code where unencapsulated pointers live, and that was one of them.
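For anyone who hasn't been bitten by this one, here is a small C++ sketch of the general shape of the fix, assuming the performances live in a container owned by the scene. `Performance` and `Scene` are hypothetical stand-ins, not the engine's real classes.

```cpp
#include <cstddef>
#include <memory>
#include <vector>

// Hypothetical stand-in for a scene performance. It counts live instances
// so that a leak would show up as a non-zero count after unloading.
struct Performance {
    static int& live() { static int n = 0; return n; }
    Performance() { ++live(); }
    ~Performance() { --live(); }
    Performance(const Performance&) = delete;
    Performance& operator=(const Performance&) = delete;
};

class Scene {
public:
    void load(std::size_t count) {
        for (std::size_t i = 0; i < count; ++i)
            performances_.push_back(std::make_unique<Performance>());
    }
    // Clearing a vector of raw pointers would only drop the pointers.
    // Clearing a vector of unique_ptr destroys each Performance as well.
    void unload() { performances_.clear(); }

private:
    std::vector<std::unique_ptr<Performance>> performances_;
};
```

With owning smart pointers, throwing away the reference and throwing away the object become the same operation.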

The other largely involves SDL_ttf and font-management.

Determined not to repeat those mistakes, I worked hard at revising the font system from end-to-end, progressively eliminating anything that smelled like a pointer. I don’t really have any specific objections to pointers, but custom pointers to custom objects that require custom destruction, well, that can be a real nuisance when you get it wrong.
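One common way to encapsulate a handle that needs custom destruction is a `std::unique_ptr` with a custom deleter. The sketch below uses a made-up C-style font API (`RawFont`, `rawOpenFont`, `rawCloseFont`) so that it stands alone; with SDL_ttf the handle would be a `TTF_Font*` and the release call would be `TTF_CloseFont`. It is an illustration of the technique, not necessarily how the engine does it.

```cpp
#include <memory>

// Stand-in for a C-style font API (hypothetical names throughout).
struct RawFont { int pointSize; };
inline int& openFontCount() { static int n = 0; return n; }
inline RawFont* rawOpenFont(int pointSize) {
    ++openFontCount();
    return new RawFont{pointSize};
}
inline void rawCloseFont(RawFont* f) {
    --openFontCount();
    delete f;
}

// Deleter type so that unique_ptr knows how to release the handle.
// unique_ptr only invokes it when the stored pointer is non-null.
struct FontCloser {
    void operator()(RawFont* f) const { rawCloseFont(f); }
};
using FontHandle = std::unique_ptr<RawFont, FontCloser>;

// The only place a raw pointer appears; callers just see FontHandle.
inline FontHandle openFont(int pointSize) {
    return FontHandle(rawOpenFont(pointSize));
}
```

Once the handle goes out of scope the font is closed automatically, so there is no cleanup call left to forget.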

Once fonts were even more neatly encapsulated than before, I realised I had some new opportunities. I had the freedom to make more changes, and do things like scale the font according to the window/screen sizes.

Now, there are two ways I could have gone about handling the UI. The simplest would be to rely on hardware-scaling. That is, decouple the game’s conception of the size of the display-area from the actual size, and use the GPU to scale my virtual display to the actual display.

It’s a technique that (apparently) works well for many people. Not so much for me.

Argus games are primarily text. You, the player, can also be thought of as “the reader”. You will be spending almost all of your time looking at rendered text.

And the rendered text didn’t survive the hardware-scaling very well. If my virtual screen was larger than the display, it blurred on the downscale. If smaller, it blurred or chunked on the upscale.

Every other aspect of the display looked fine, except the actual text, where I wanted the player’s eyes most of the time.

So, the other way of doing things was to take care of the scaling and positioning of UI elements myself. Take the display numbers and do some arithmetic to figure out where everything should be and how big it should be. That allows the display area to be anywhere from 1024×700 (about the minimum size we can squeeze everything into) up to as large as you can actually display it.
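The arithmetic is nothing fancy. As a sketch only, assuming a made-up layout of a narrative panel beside a sidebar (the 2% margin and the 70/30 split are invented numbers, not the real Argus layout), it might look like this in C++:

```cpp
#include <algorithm>

struct Rect { int x, y, w, h; };
struct Layout { Rect narrative; Rect sidebar; };

// Hypothetical layout rule: a margin of 2% of the width, a narrative panel
// taking 70% of the usable width, and a sidebar taking whatever is left.
inline Layout computeLayout(int displayW, int displayH) {
    // Clamp to the minimum display size mentioned in the post.
    const int w = std::max(displayW, 1024);
    const int h = std::max(displayH, 700);

    const int margin = w / 50;
    const int usableW = w - 3 * margin;  // left, middle, and right gaps
    const int narrativeW = usableW * 7 / 10;
    const int panelH = h - 2 * margin;

    Layout l;
    l.narrative = {margin, margin, narrativeW, panelH};
    l.sidebar = {2 * margin + narrativeW, margin, usableW - narrativeW, panelH};
    return l;
}
```

Everything is derived from the two display numbers, so the same function serves any window size from the minimum upward.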

Which is cool, but managing fonts the way I had been meant that the text itself never actually changed size.

I could do better, now that I’d reworked font-management, I thought.

And I did, I believe.

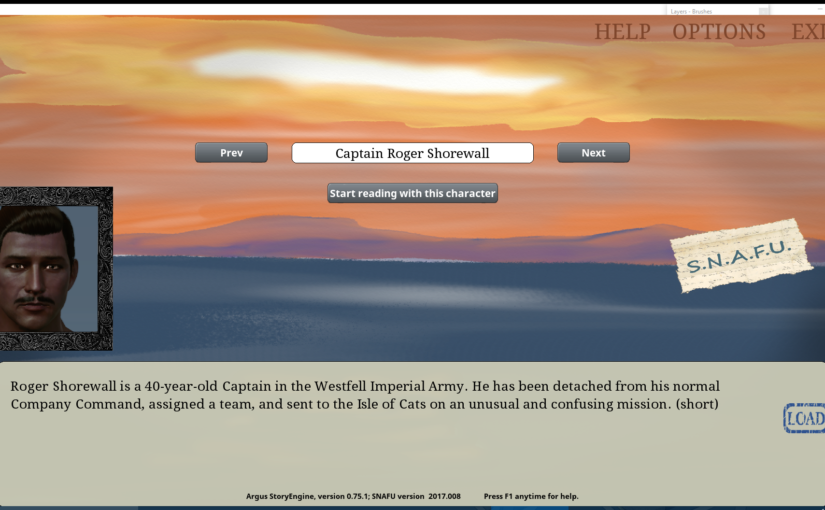

Here’s the UI rendered at 1920×1080 (almost, anyway – it’s windowed):

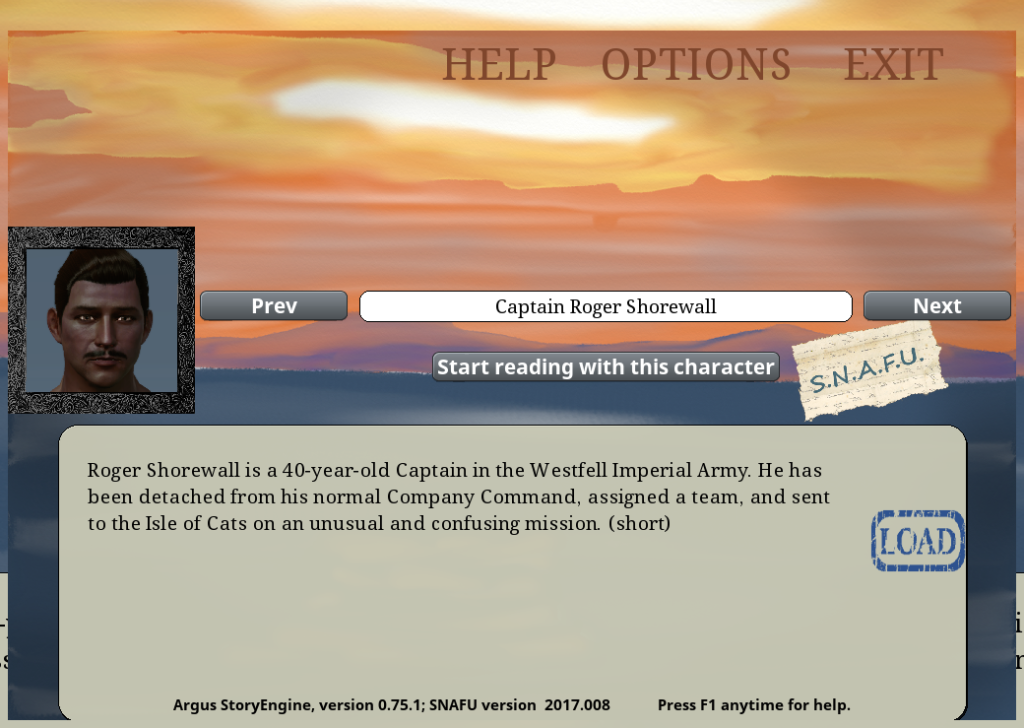

And here it is at 1024×700:

As you can see, various elements have moved and/or resized to accommodate the different display dimensions. (There are a couple of screenshot artifacts around the edges. Ignore those.)

Adjusting the size of the loaded narrative font (the one with the serifs) has improved the clarity and readability of the text in both images. The amount of text per line is different between the two, though, for a couple of reasons.

In general, we can only really estimate the amount of text that will fit in a given rectangle. Firstly, the font isn’t monospaced, so the number of characters per line depends on exactly which characters there are. Secondly, font-sizes are integers, so we try to get the closest match for a given size of display-rectangle, but it isn’t ever going to be exact.
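The integer-size problem can be sketched as a simple search: try point sizes in order and keep the largest one that still fits. This C++ example is an assumption about the general approach, not the engine's code. `fitPointSize` and its `lineHeightFor` callback are hypothetical; in practice the callback would ask the font library for the line height at that size.

```cpp
#include <functional>

// Pick the largest integer point size in [minSize, maxSize] at which
// `targetLines` lines of text still fit in `rectHeight` pixels.
// `lineHeightFor` is a hypothetical callback returning the line height in
// pixels for a point size; it is assumed to be non-decreasing in size.
inline int fitPointSize(int rectHeight, int targetLines, int minSize, int maxSize,
                        const std::function<int(int)>& lineHeightFor) {
    int best = minSize;  // fall back to the minimum even if it overflows
    for (int size = minSize; size <= maxSize; ++size) {
        if (lineHeightFor(size) * targetLines <= rectHeight) best = size;
        else break;  // larger sizes will not fit either
    }
    return best;
}
```

Because sizes step in whole points, the chosen size usually leaves a few pixels spare, which is why the fit is never exact.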

Kerning was another thing that had to be reworked.

I’ve had kerning issues before.

Manually examining the font reveals that it is (as it should be) packed with kerning information for various character-pairs. SDL_ttf, however, insistently and persistently returns zero when I ask for the kerning offset between any pair of characters. Nobody seems to know why.

So, back in week 31, I built my own kerning table. It worked great, providing pixel offsets (positive or negative) for character pairs in various fonts that I was using. Of course that wouldn’t work if I was changing the font sizes around on the fly. And now I was. One pixel of adjustment means a lot less if the font is, for example, much larger.

So, the kerning table now has percentages-of-advance-width rather than integer pixel offsets, and that seems to work alright, though I’ll have to manually rebuild some of the kerning table as I go.
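As a rough C++ sketch of the idea: store each pair's adjustment as a percentage, then convert to pixels at render time using the advance width at the current font size. The table contents are invented, and taking the percentage of the left-hand glyph's advance is my assumption for the example; `KerningTable` and `kerningPixels` are hypothetical names.

```cpp
#include <cmath>
#include <map>
#include <utility>

// Kerning adjustments stored as a percentage of the left-hand glyph's
// advance width (an assumption made for this sketch).
using KerningTable = std::map<std::pair<char, char>, double>;

// Convert the stored percentage into a pixel offset for the current font
// size, rounding to the nearest whole pixel. Unknown pairs get no kerning.
inline int kerningPixels(const KerningTable& table, char left, char right,
                         int leftAdvancePx) {
    auto it = table.find({left, right});
    if (it == table.end()) return 0;
    return static_cast<int>(std::lround(it->second / 100.0 * leftAdvancePx));
}
```

The same table entry then yields a one-pixel nudge at a small size and a proportionally larger one when the font is scaled up.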

The last part of the work involved improving the integration of Lua scripts by exposing more engine functionality to Lua, and coming up with a tidy way of encapsulating all of that.

But I’ve gone on long enough, and perhaps I’ll talk more about that next week, because I’ll certainly be doing more work in that area.

For now, thanks for tuning in again. More next week!